Benchmarking measures and rules

Benchmarking of solar radiation products can be done in different ways. If reference data are available that can be assumed to be the “truth”, the modelled data sets can be compared with them and ranked by how well they represent the reference data. Reference data are not always available, however, e. g. for spatial solar radiation products (maps). In that case benchmarking can assess the uncertainty of the mapping products by cross-comparing them.

For site-specific time series there are a number of different benchmarking measures. A first set is based on first order statistics: the well-known bias, root mean square deviation and standard deviation, their values relative to the average of the data set, and the correlation coefficient. These measures quantify how well data pairs at the same point in time agree with each other. They are important if an exact representation of real data is needed, e. g. for evaluations of real operating systems or forecasts of solar radiation parameters.
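The first order measures listed above can be sketched as follows. This is a minimal illustration, not the MESoR reference implementation: the function name `first_order_stats`, the dictionary keys and the sign convention (modelled minus measured) are assumptions made for this example, and the standard deviation is taken as the population standard deviation of the deviations around the bias.

```python
import math


def first_order_stats(measured, modelled):
    """First order statistics for paired values at the same points in time.

    Assumed convention: deviation = modelled - measured.
    Relative values are expressed as a fraction of the mean measured value.
    """
    n = len(measured)
    if n == 0 or n != len(modelled):
        raise ValueError("need two non-empty series of equal length")

    diffs = [m - o for o, m in zip(measured, modelled)]
    mean_obs = sum(measured) / n
    mean_mod = sum(modelled) / n

    bias = sum(diffs) / n
    rmsd = math.sqrt(sum(d * d for d in diffs) / n)
    # Standard deviation of the deviations around the bias (population form),
    # so that rmsd**2 == bias**2 + sd**2.
    sd = math.sqrt(sum((d - bias) ** 2 for d in diffs) / n)

    # Pearson correlation coefficient between measured and modelled values.
    cov = sum((o - mean_obs) * (m - mean_mod) for o, m in zip(measured, modelled))
    var_obs = sum((o - mean_obs) ** 2 for o in measured)
    var_mod = sum((m - mean_mod) ** 2 for m in modelled)
    if var_obs == 0 or var_mod == 0:
        corr = float("nan")  # undefined for a constant series
    else:
        corr = cov / math.sqrt(var_obs * var_mod)

    return {
        "bias": bias,
        "rmsd": rmsd,
        "sd": sd,
        "rel_bias": bias / mean_obs,
        "rel_rmsd": rmsd / mean_obs,
        "rel_sd": sd / mean_obs,
        "corr": corr,
    }
```

For example, `first_order_stats([100, 200, 300, 400], [110, 190, 320, 400])` gives a bias of 5 and a relative bias of 2 % of the mean measured value.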

Such an exact match is not always important, e. g. for system design studies. Here the similarity of statistical properties such as frequency distributions matters more than the exact match of data pairs. The MESoR project therefore suggests a number of parameters based on second order statistics [6].
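The text does not name the individual second order parameters, so the following is only one possible distribution-based measure, chosen for illustration: the largest absolute difference between the empirical cumulative distribution functions of the measured and the modelled values (the two-sample Kolmogorov-Smirnov distance). The function name `ecdf_distance` is hypothetical.

```python
from bisect import bisect_right


def ecdf_distance(measured, modelled):
    """Largest absolute difference between the two empirical CDFs.

    The pairing in time is ignored on purpose: only the frequency
    distributions of the two data sets are compared.
    """
    if not measured or not modelled:
        raise ValueError("both data sets must be non-empty")

    a = sorted(measured)
    b = sorted(modelled)
    # The ECDFs only change at observed values, so it is enough to
    # evaluate the difference at every distinct value of either set.
    points = sorted(set(a) | set(b))
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in points
    )
```

A modelled series that contains the same values as the measurements in a different order yields a distance of zero, although its bias-free pairwise deviations can be large. This is the kind of case where distribution-based measures and first order statistics lead to different rankings.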

Besides a common set of measures, the selection of valid data pairs is important to achieve comparable results. A valid data pair should have passed the quality control procedure described above, the measured global horizontal ground value should be above zero (valid measurement, sun above the horizon), and the modelled value should be valid. Average values (e. g. for relative bias or standard deviation) are calculated from the valid data pairs only. If averages over multiple stations are to be calculated, all data pairs should be given the same weight: averages should be calculated from the complete data set and not from the results of the single stations. This becomes relevant if the stations have different numbers of valid data pairs.
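The selection and pooling rules can be illustrated with a short sketch. The data layout is an assumption made for this example: invalid values are represented by `None`, the quality control result is a boolean flag per time step, and the function names are hypothetical.

```python
def valid_pairs(measured, modelled, qc_passed):
    """Keep only pairs that passed quality control, have a measured value
    above zero and a valid (here: not None) modelled value."""
    return [
        (o, m)
        for o, m, ok in zip(measured, modelled, qc_passed)
        if ok and o is not None and m is not None and o > 0
    ]


def pooled_relative_bias(stations):
    """Relative bias from the complete data set: every valid data pair
    of every station enters with the same weight."""
    pairs = [p for station_pairs in stations.values() for p in station_pairs]
    mean_obs = sum(o for o, _ in pairs) / len(pairs)
    bias = sum(m - o for o, m in pairs) / len(pairs)
    return bias / mean_obs


def mean_of_station_relative_bias(stations):
    """Average of the single station results, shown only for contrast.
    Each station gets the same weight regardless of its number of pairs."""
    results = []
    for station_pairs in stations.values():
        mean_obs = sum(o for o, _ in station_pairs) / len(station_pairs)
        bias = sum(m - o for o, m in station_pairs) / len(station_pairs)
        results.append(bias / mean_obs)
    return sum(results) / len(results)
```

With three valid pairs `(100, 110)` at one station and a single pair `(200, 180)` at another, the pooled relative bias is 2 %, whereas averaging the two station results (+10 % and -10 %) gives 0 %. The two approaches coincide only if all stations contribute the same number of valid pairs and have the same mean measured value.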

A benchmarking exercise applying these measures and rules to the data bases available within Europe will be carried out in the second half of 2008.

Benchmarking of angular distributions is still difficult, as the available instruments differ widely in their characteristics: number of sensors, acquired parameters (irradiance, radiance, luminance), geometry of the measurement directions in the sky hemisphere, spectral sensitivity of the sensors, aperture angle, size and shape of the sensors, and the sensors' linearity and dynamics. Furthermore, only very few measurements are available so far.

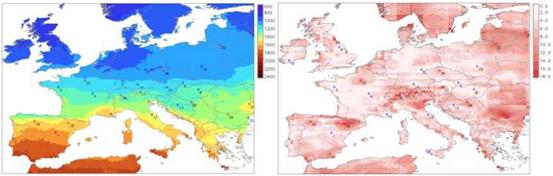

Solar maps can be benchmarked in two ways, point based or map based. Point based benchmarking is similar to time series benchmarking: data are extracted from the maps and compared to the measurements (“ground truth”), and first and second order statistics can be applied. Map based cross-comparison of solar radiation improves the understanding of the regional distribution of the uncertainty by combining all existing resources (calculating the average of all) and quantifying their mutual agreement by means of the standard deviation. A sample evaluation has been done with five spatial data bases: ESRA, PVGIS, Meteonorm, Satel-light and NASA SSE.

Fig. 1: Yearly sum of global horizontal irradiation, average of all five data bases [kWh/m2] (left); uncertainty at 95% confidence interval from the comparison of the five data bases [%] (right).
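The map based cross-comparison can be sketched per grid cell as follows. The sketch assumes that all maps have already been resampled to a common grid and are given as nested lists of yearly sums; the function name `cross_compare` and the use of the sample standard deviation are assumptions. How the standard deviation is converted into the 95 % uncertainty shown in Fig. 1 is not specified in the text, so only the relative standard deviation is computed here.

```python
import math


def cross_compare(maps):
    """Cell-by-cell mean and relative standard deviation of several maps.

    maps: list of at least two equally shaped 2D grids (lists of rows),
          e.g. yearly global horizontal irradiation in kWh/m2.
    Returns (mean_map, rel_std_map), the latter in percent of the mean.
    """
    n = len(maps)
    if n < 2:
        raise ValueError("at least two maps are needed for a cross-comparison")

    rows, cols = len(maps[0]), len(maps[0][0])
    mean_map = [[0.0] * cols for _ in range(rows)]
    rel_std_map = [[0.0] * cols for _ in range(rows)]

    for i in range(rows):
        for j in range(cols):
            values = [grid[i][j] for grid in maps]
            mean = sum(values) / n
            # Sample standard deviation across the data bases (n - 1).
            std = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
            mean_map[i][j] = mean
            rel_std_map[i][j] = 100.0 * std / mean

    return mean_map, rel_std_map
```

Cells where the data bases agree closely get a small relative standard deviation, while cells with large values point to regions where the mapping products are mutually inconsistent.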

A set of benchmarks has been proposed to evaluate solar radiation forecasts. Different methods of assessing prediction quality are appropriate depending on the application. For the users of a forecast, the verification scheme should be kept as simple as possible and be reduced to a minimum set of accuracy measures. The statistical measures are similar to those for time series but need to be adapted to forecast-specific issues. Forecasts should be evaluated not only against the measured data but also against reference models such as persistence or autoregressive models, in order to show the advantage of a forecasting system. For forecasting, not only the quality of the prediction at a single site is of interest but also the accuracy of the prediction for an ensemble of distributed sites, e. g. sites feeding into an electricity grid node.
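A comparison against a persistence reference can be sketched as follows. The text does not prescribe a specific measure, so the RMSE-based skill score used here is an assumption, as are the function names and the simple form of persistence (the value measured `horizon` steps earlier is used as the prediction).

```python
import math


def rmse(observed, predicted):
    """Root mean square error of paired values."""
    n = len(observed)
    return math.sqrt(sum((p - o) ** 2 for o, p in zip(observed, predicted)) / n)


def skill_vs_persistence(measured, forecast, horizon=1):
    """Skill score 1 - RMSE(forecast) / RMSE(persistence).

    forecast[i] is the prediction for measured[i]. The first `horizon`
    time steps are skipped because persistence has no value for them.
    A positive score means the forecast beats persistence.
    """
    if horizon < 1 or horizon >= len(measured):
        raise ValueError("horizon must be between 1 and len(measured) - 1")

    target = measured[horizon:]
    persistence = measured[:-horizon]  # value from `horizon` steps earlier
    reference_error = rmse(target, persistence)
    if reference_error == 0:
        raise ValueError("persistence is perfect; skill score is undefined")

    return 1.0 - rmse(target, forecast[horizon:]) / reference_error
```

For measurements `[100, 200, 300, 400]` and forecasts `[0, 190, 310, 390]` the forecast RMSE is 10 and the persistence RMSE is 100, giving a skill score of 0.9. For an ensemble of distributed sites the same function could be applied to the summed measurements and summed forecasts of all sites.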